
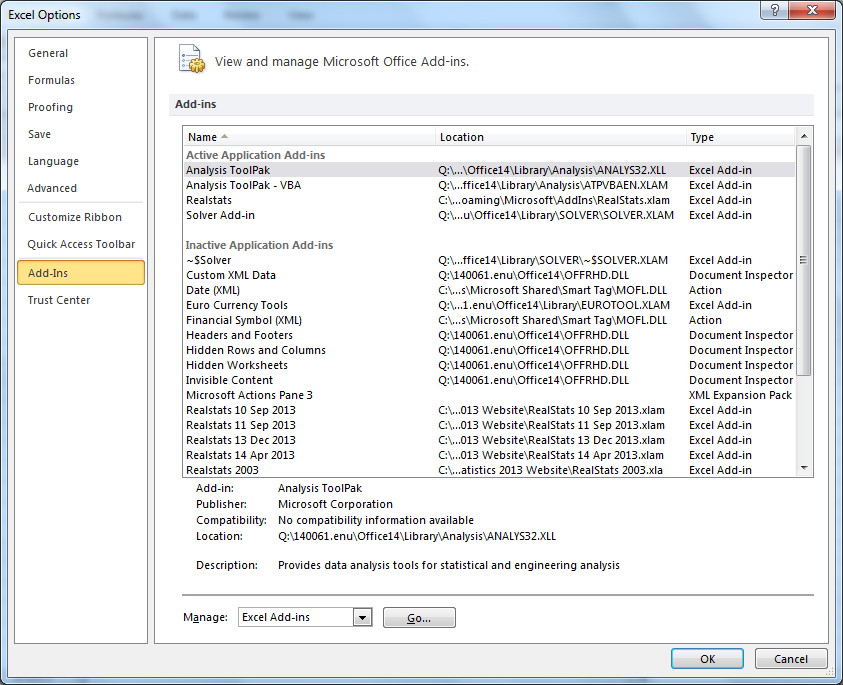

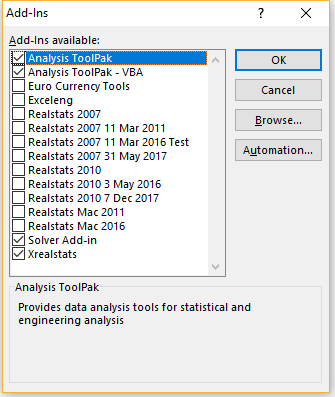

This article helps Excel users at all levels load the Data Analysis ToolPak in Excel for Mac. In a previous article, we explored linear regression analysis and its application in financial analysis and modeling. You can read our Regression Analysis in Financial Modeling article to gain more insight into the statistical concepts employed in the method and where it finds application within finance.

Where is Data Analysis on a Mac?

The Data Analysis tools can be accessed from the Data tab. When we can’t find the Data Analysis button in the toolbar, we must first load the Analysis ToolPak in Excel for Mac. Another add-in available for Mac is the Real Statistics Resource Pack for Macintosh.

Analysis of Variance for Mac Users of Excel

You can use StatPlus LE to do analysis of variance in Excel on a Mac. The first thing you have to do, however, is rearrange the data into columns, the way StatPlus likes it. The following figure shows the rearrangement and the recoding.

You’ll work with a variety of data sources, project scenarios, and data analysis tools, including Excel, SQL, Python, Jupyter Notebooks, and Cognos Analytics.

The EU dataset gives us information for all member states of the union. As a massive fan of Agatha Christie’s Hercule Poirot, I suggest we direct our attention to Belgium. As you can see in the table below, we have nineteen observations of our target variable (GDP), as well as our three predictor variables: X1 (Education Spend), X2 (Unemployment Rate as % of the Labor Force), and X3 (Employee Compensation). I have also kept the links to the source tables so you can explore further if you want.

Even before we run our regression model, we notice some dependencies in our data. Looking at the development over the periods, we can assume that GDP increases together with Education Spend and Employee Compensation.

Running a Multiple Linear Regression

There are ways to calculate all the relevant statistics in Excel using formulas, but here we use the Data Analysis tool. Note that we use the same menu for both simple (single) and multiple linear regression models.

Now it’s time to set some ranges and settings. The Y Range will include our dependent variable, GDP. In the X Range, we will select all X variable columns. Please note that this is the same as running a single linear regression, the only difference being that we choose multiple columns for the X Range. Remember that Excel requires all X variables to be in adjacent columns. As I have selected the column titles, it is crucial to mark the checkbox for Labels. A 95% confidence level is appropriate in most financial analysis scenarios, so we will not change this.

You can then consider placing the output on the same sheet or a new one. A new worksheet usually works best, as the tool inserts quite a lot of data. I will also mark all the additional options at the bottom. I rarely end up using all of them, but it’s easier to delete the ones we don’t need than to rerun the whole thing.

Now that we have our Summary Output from Excel, let’s explore our regression model further. The information we got out of Excel’s Data Analysis module starts with the Regression Statistics. R Square is the most important among those, so we can start by looking at it.
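
To make the Y Range and X Range idea concrete outside of Excel, here is a minimal sketch of an ordinary least squares fit with several predictors, solved through the normal equations in plain Python. The function names (`solve_linear_system`, `fit_ols`) and the data are made up for illustration; these are not the Belgian figures used in the article, and this is not how Excel implements its Regression tool internally.

```python
def solve_linear_system(a, b):
    """Solve a * x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    # Augmented matrix, so row operations apply to both sides at once.
    m = [[float(v) for v in a[i]] + [float(b[i])] for i in range(n)]
    for col in range(n):
        # Swap in the row with the largest pivot for numerical stability.
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for row in range(col + 1, n):
            factor = m[row][col] / m[col][col]
            for k in range(col, n + 1):
                m[row][k] -= factor * m[col][k]
    # Back substitution on the upper-triangular system.
    x = [0.0] * n
    for i in range(n - 1, -1, -1):
        s = sum(m[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (m[i][n] - s) / m[i][i]
    return x


def fit_ols(x_rows, y):
    """Return [intercept, b1, b2, ...] that minimise the squared error."""
    # A leading column of ones plays the role of the intercept.
    design = [[1.0] + list(row) for row in x_rows]
    p = len(design[0])
    xtx = [[sum(r[i] * r[j] for r in design) for j in range(p)] for i in range(p)]
    xty = [sum(r[i] * yi for r, yi in zip(design, y)) for i in range(p)]
    return solve_linear_system(xtx, xty)


# Hypothetical data: two predictors per observation (the "X Range")
# and a target built as y = 1 + 2*x1 + 3*x2 (the "Y Range").
x_rows = [(1, 2), (2, 1), (3, 4), (4, 3), (5, 6), (6, 5)]
y = [1 + 2 * a + 3 * b for a, b in x_rows]

coefficients = fit_ols(x_rows, y)
print([round(c, 6) for c in coefficients])
```

Each tuple in `x_rows` corresponds to one row across the adjacent X columns in the worksheet, which is why the tool asks for the predictors to sit side by side.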

R Square gives us an idea of the overall goodness of the fit. An adjusted R Square of 0.98 means our regression model can explain around 98% of the variation of the dependent variable Y (GDP) around the average value of the observations (the mean of our sample). In other words, 98% of the variability in y (the observed values of our dependent variable) is captured by our model. Such a high value would usually indicate there might be some issue with our model. We will continue with our model, but a too-high R Square can be problematic in a real-life scenario. I suggest you read this article on Statistics by Jim to learn why too good is not always right in terms of R Square.

The Standard Error gives us an estimate of the standard deviation of the error (residuals).

The Analysis of Variance section is something we often skip when modeling regression. This table gives us an overall test of significance on the regression parameters. The ANOVA table’s F column gives us the overall F-test of the null hypothesis that all coefficients are equal to zero. The alternative hypothesis is that at least one of the coefficients is not equal to zero. The Significance F column shows us the p-value for the F-test. As it is lower than the significance level of 0.05 (which corresponds to our chosen confidence level of 95%), we can reject the null hypothesis that all coefficients are equal to zero. You can read more about running an ANOVA test and see an example model in our dedicated article.

Generally, if a coefficient is large compared to its standard error, it is probably statistically significant.
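
As a rough illustration of where these summary numbers come from, the sketch below computes R Square, adjusted R Square, the Standard Error of the regression, and the F statistic from observed and predicted values. The function name `regression_summary` and the five data points are hypothetical. The decomposition used for the regression sum of squares assumes the predictions come from a least squares fit that includes an intercept.

```python
def regression_summary(y, y_hat, n_predictors):
    """Compute goodness-of-fit statistics for an OLS fit with an intercept."""
    n = len(y)
    mean_y = sum(y) / n
    ss_total = sum((yi - mean_y) ** 2 for yi in y)
    ss_resid = sum((yi - fi) ** 2 for yi, fi in zip(y, y_hat))
    # Valid for least squares with an intercept: SST = SSR + SSE.
    ss_reg = ss_total - ss_resid
    df_resid = n - n_predictors - 1

    r_square = 1 - ss_resid / ss_total
    adj_r_square = 1 - (1 - r_square) * (n - 1) / df_resid
    std_error = (ss_resid / df_resid) ** 0.5
    f_stat = (ss_reg / n_predictors) / (ss_resid / df_resid)
    return {
        "r_square": r_square,
        "adj_r_square": adj_r_square,
        "std_error": std_error,
        "f_stat": f_stat,
    }


# Hypothetical example: y regressed on x = 1..5 gives y_hat = 2.2 + 0.6 * x.
y = [2, 4, 5, 4, 5]
y_hat = [2.8, 3.4, 4.0, 4.6, 5.2]

summary = regression_summary(y, y_hat, n_predictors=1)
for name, value in summary.items():
    print(f"{name}: {value:.4f}")
```

The Significance F value is the upper-tail probability of this F statistic under an F distribution with `n_predictors` and `n - n_predictors - 1` degrees of freedom. It is left out here to keep the sketch dependency-free; if SciPy is available, `scipy.stats.f.sf` can compute it.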

We can look at the p-values for each coefficient and compare them to the significance level of 0.05. If a p-value is less than the significance level, the corresponding independent variable is statistically significant for the model. Looking at our X1 to X3 predictors, we notice that only X3 (Employee Compensation) has a p-value below 0.05, meaning X1 (Education Spend) and X2 (Unemployment Rate) do not seem to be statistically significant for our regression model. As we cannot reject the null hypothesis (that these coefficients are equal to zero), we can consider eliminating X1 and X2 from the model. We can also confirm this because the value zero lies between the Lower and Upper confidence bounds for both coefficients.

We may decide to run the model without the X1 and X2 variables and evaluate whether this results in a significant drop in the adjusted R Square measure. If it doesn’t, then it’s safe to drop X1 and X2 from the regression model. If we do that, we get the following Regression Statistics. We can see no drop in R Square, so we can safely remove X1 and X2 from our model and simplify it to a single linear regression.

Residual Output

The residuals give information on how far the actual data points (y) deviate from the predicted data points (ŷ), based on our regression model. We can use the residual plots to evaluate whether our sample data fit the assumptions of linearity and homogeneity of variance. Homogeneity means that the plot should exhibit a random pattern and have a constant vertical spread. Linearity requires that the residuals have a mean of zero. We can observe this visually by assessing whether the points are spread approximately equally below and above the x-axis.

The model provides us with one Line Fit Plot for each independent variable (predictor). This shows the predicted values (ŷ) versus the observed values (y). The closer these match, the better our model predicts the dependent variable based on the regressors.

The Probability Output table shows the observed values for the dependent variable (y) and the corresponding sample percentiles. The Normal Probability Plot helps us determine whether the data fit a normal distribution. We can add a Trendline and evaluate if the data points follow a straight line.

A few things to keep in mind. The regression analysis in Excel assumes the error is independent with constant variance (homoskedasticity). We can have up to 16 predictors (I can’t remember where I read that, so take it with caution). Columns for all regressors (independent variables) have to be adjacent. If we go the functions route, it is crucial to know that the Excel functions SLOPE, INTERCEPT, and FORECAST do not work for multiple regression. In contrast, TREND and LINEST work the same way as with a single regression model but take values for multiple X variables.

We started with three independent variables, performed a regression analysis, and identified that two predictors don’t have statistical significance for our model. We then eliminated those to end up with a single linear regression model. Once you are satisfied with your model, you can build your regression equation, as we have discussed in other articles.
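
The check that zero lies between the Lower and Upper bounds can be sketched as follows. The coefficient and standard error values are invented for illustration and are not the article's output. The critical value 2.131 is the two-sided 95% t value for 15 degrees of freedom, which is what nineteen observations and three predictors would leave (19 - 3 - 1).

```python
def confidence_interval(coefficient, std_error, t_critical):
    """Return the (lower, upper) bounds of the coefficient's interval."""
    margin = t_critical * std_error
    return coefficient - margin, coefficient + margin


def is_significant(coefficient, std_error, t_critical):
    """A coefficient is significant when its interval excludes zero."""
    lower, upper = confidence_interval(coefficient, std_error, t_critical)
    return not (lower <= 0.0 <= upper)


# Two-sided 95% critical t value for 15 degrees of freedom (19 - 3 - 1).
T_CRITICAL = 2.131

# Hypothetical (coefficient, standard error) pairs, NOT the article's output.
estimates = {
    "X1 Education Spend": (0.8, 1.1),
    "X2 Unemployment Rate": (-0.5, 0.9),
    "X3 Employee Compensation": (1.9, 0.2),
}

results = {}
for name, (coef, se) in estimates.items():
    lower, upper = confidence_interval(coef, se, T_CRITICAL)
    results[name] = is_significant(coef, se, T_CRITICAL)
    print(f"{name}: [{lower:.3f}, {upper:.3f}] significant={results[name]}")
```

With these made-up numbers, only the third predictor has an interval that excludes zero, which mirrors the situation described in the text. It also shows the rule of thumb at work: the coefficient has to be large relative to its standard error before the interval clears zero.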


Author: Eric
